February 14, 2023 | By Anik Bose, General Partner at BGV and Founder of EAIGG

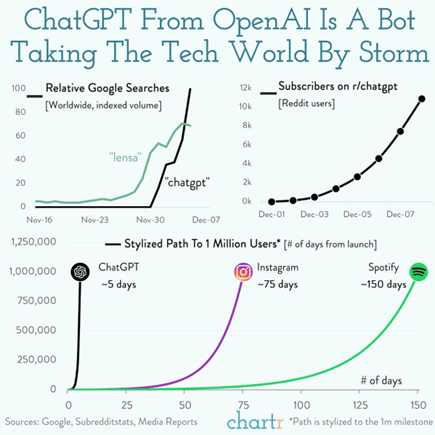

The release of ChatGPT has set off a “Generative AI” storm in the tech industry. Microsoft’s investment in OpenAI has made headlines as a potential challenge to Google’s monopoly in search. According to PitchBook, VCs have increased investment in Generative AI by 425% since 2020, to $2.1bn. “Tech Twitter” blew up, and even the mainstream BBC reported on it. ChatGPT is now at capacity and unable to process queries most of the time.

At BGV, where I am a General Partner, human-centric AI is foundational to our VC investment thesis. We have dug deeply into the challenges of building B2B AI businesses in our portfolio, as well as into how disruptive venture-scale businesses can be built around Generative AI.

As a founder of the Ethical AI Governance Group (EAIGG), I share with fellow members a passion for sharing best practices for the responsible development and deployment of AI in our industry. I decided to put ChatGPT to the test on the topic of human-centric AI by asking two simple questions: a) What are the promises and perils of Human AI? b) What are important innovations in Ethical AI? I contrasted ChatGPT’s responses with what we have learned from subject matter experts on the same questions: Erik Brynjolfsson’s piece in Daedalus (2022), titled “The Turing Trap”, on the promises and perils of AI (see link here), and Abhinav Ragunathan’s market map of Human Centric Ethical AI startups (EAIDB), published in the EAIGG annual report (2022) (see link here). This analysis, combined with BGV’s investment thesis work around enterprise 4.0 and ethical AI governance, led me to several conclusions that are contrary to, and more nuanced than, what the generic media narrative about ChatGPT and Generative AI suggests:

- ChatGPT represents a tremendous area of innovation, but it will not replace Google’s search engine overnight:

  - The answers are generic, lack depth and are sometimes wrong. Before trusting ChatGPT’s responses, users will need to confirm the integrity and veracity of the information sources. Already, Stack Overflow has banned ChatGPT, saying: “Overall, because the average rate of getting correct answers from ChatGPT is too low, the posting of answers created by ChatGPT is substantially harmful to our site and to users who are looking for correct answers.” Given the effort required to verify responses, ChatGPT is not yet ready for enterprise (B2B) use cases. As unverified and inaccurate data sets proliferate, ChatGPT’s “garbage in equals garbage out” output will quickly expose the shaky data foundation on which its answers rely.

  - Putting aside the matter of accuracy, there is also the question of sustainable business models. While ChatGPT is free today, running GPUs is expensive, so competing profitably at scale with Google’s search engine is a tall order.

  - Google will not stand still; it has already launched Bard, with machine and imitation learning, to “outscore” ChatGPT on conversational services. We’ve only seen the opening act in a much larger showdown.

- Generative AI tools like ChatGPT can augment subject matter experts by automating repetitive tasks to provide a baseline, but they will never displace them (especially in B2B use cases) because they lack domain-specific contextual knowledge and because the underlying data sets need to be trusted and verified. For this reason, broader adoption of ChatGPT will spur increased demand for authenticated, verifiable ground truth, fueling tailwinds for data integrity and verification solutions, as well as for innovations that address a whole host of ethical AI issues such as privacy, fairness and governance. Ultimately, human-centric AI will emerge as the bridge to Human-Like Artificial Intelligence (HLAI).

- Generative AI will go well beyond chatbots to cover use cases like creating content, writing and debugging code (automation scripts), generating AI art, and managing and manipulating data. But many of the first wave of generative AI startups will fail to build profitable venture-scale B2B businesses unless they explicitly address a few challenges:

  - Inherent trust and verification issues

  - Lack of defensible moats

  - Unsustainable business models given the high costs of running GPUs

- Wearing my VC hat as an enterprise tech investor, I expect winning Generative AI B2B startups to fall into several categories:

  - Applications – Integrating generative AI models into user-facing, sticky productivity apps, using foundation models or proprietary models as a base to build on (in verticals like media, gaming, design and copywriting, or in key functions like DevOps, marketing and customer support).

  - Models – Verticalized models will be needed to power the applications highlighted above. Leveraging foundation models and open-source checkpoints can yield productivity and a quicker path to monetization but may lack defensibility.

  - Infrastructure – Innovations to cost-effectively run training and inference workloads for generative AI models by breaking the GPU cost curve. We will also see AI governance solutions that address the unintended consequences of the “disinformation” created by broader adoption of tools like ChatGPT, as well as a wide range of ethical issues.

Today it is unclear where in the stack most of the value will accrue: infrastructure, models, or apps. Currently, infrastructure providers (like NVIDIA) are the biggest beneficiaries of OpenAI. It is also unclear where startups can break the oligopoly of infrastructure incumbents like Google, AWS and Microsoft, who touch everything. See the a16z article that does a good job expanding on this here. In the shorter term, BGV believes that most of the winning startups will be in the application layer. They will make use of the democratization of generative AI but bring it into everyday workflows, using intelligent workflow automation and proprietary verticalized data sets to deliver the most value to end users in terms of productivity improvements, agility, resiliency and cost savings. There will also be opportunities for startups to innovate at the hardware layer to break the GPU cost curve, but these are likely to be more capital-intensive investments.

What is increasingly clear, however, is that the surge in Generative AI interest will quickly prompt demands to prioritize human values, guardrails and ethical AI governance norms. As Abhinav Ragunathan writes, “the modern ‘tech stack’ will quickly evolve into an ‘ethics stack’ in which the flow of data moves through companies [that ensure these constraints are in place].” Moreover, the subsequent innovation prompted by the Generative AI “boom” is poised to follow a curve similar to “ethics predecessors” like that of cybersecurity in the late-2000s and privacy in the late-2010s.

ChatGPT Queries vs Thought Leader Perspectives

| Query Question | ChatGPT Response | Thought Leader Perspective |
| --- | --- | --- |
| Query #1: What are the promises and perils of human AI? | The promises and perils of human-AI refer to the potential benefits and risks associated with the integration of artificial intelligence into human societies. Promises of human-AI include: – Increased efficiency and productivity: AI can automate repetitive tasks, freeing up time for humans to focus on more complex and creative work. – Improved decision-making: AI can analyze vast amounts of data and provide insights that may lead to better decision-making. – Enhanced quality of life: AI can assist with tasks such as diagnosing medical conditions and providing personalized healthcare, making life easier and more convenient for people. Perils of human-AI include: – Job displacement: As AI becomes increasingly capable of performing tasks traditionally done by humans, there may be a risk of job loss and unemployment. – Bias and discrimination: AI systems can perpetuate existing biases and discrimination if they are trained on biased data. – Privacy concerns: AI systems can collect and store large amounts of personal data, raising concerns about privacy and security. – Loss of human control: As AI systems become more autonomous, there is a risk that humans may lose control over their actions and decisions, leading to unintended consequences. In conclusion, the integration of AI into human societies holds both promises and perils, and it’s important for society to carefully consider the potential impacts and take steps to minimize the risks. | Erik Brynjolfsson (Stanford HAI) authored an insightful thought piece in Daedalus (2022), titled “The Turing Trap”, on the promises and perils of AI; see link here. Key takeaways summarized by Anik from the thought piece are: – The benefits of human-like artificial intelligence (HLAI) include soaring productivity, increased leisure, and perhaps most profoundly a better understanding of our own minds. But not all types of AI are human-like (in fact, many of the most powerful systems are very different from humans), and an excessive focus on developing and deploying HLAI can lead us into a trap. – The breakthroughs in robotics depend not merely on more dexterous mechanical hands and legs, and more perceptive synthetic eyes and ears, but also on increasingly human-like artificial intelligence (HLAI). – Powerful AI systems are crossing key thresholds: matching humans in a growing number of fundamental tasks such as image recognition and speech recognition, with applications from autonomous vehicles and medical diagnosis to inventory management and product recommendations. – Automation increases productivity. Moreover, there are many tasks that are dangerous, dull, or dirty, and those are often the first to be automated. As more tasks are automated, a fully automated economy could, in principle, be structured to redistribute the benefits from production widely, even to those people who are no longer strictly necessary for value creation. – Government policy could do more to steer the economy clear of the Turing Trap. The growing use of AI, even if only for complementing workers, and the further reinvention of organizations around this new general-purpose technology imply a great need for worker training or retraining. In fact, for each dollar spent on machine learning technology, companies may need to spend nine dollars on intangible human capital. – The risks of the Turing Trap are increased not by just one group in our society, but by the misaligned incentives of technologists, businesspeople, and policy-makers. The future is not preordained. We control the extent to which AI either expands human opportunity through augmentation or replaces humans through automation. |
| Query #2: What are important innovations in Ethical AI? | There have been several important innovations in ethical AI, including: – Fairness: Techniques to ensure that AI systems are fair and unbiased, such as algorithmic auditing and debiasing. – Explainability: Methods to make AI systems more transparent and explainable, such as interpretability and model explanation techniques. – Privacy: Tools to protect the privacy of individuals, such as differential privacy and federated learning. – Accountability: Frameworks for holding developers and users of AI systems accountable for their actions, such as responsible AI governance. – Human-centered design: Incorporating the values and needs of the people who will interact with AI systems into the design process, such as user-centered design and participatory design. | Abhinav Ragunathan (EAIGG community member) published a market map of Human Centric Ethical AI startups (EAIDB) in the EAIGG annual report. See link here. Key takeaways summarized by Anik were: – Demand for ethical AI has skyrocketed, and public discussion has internalized the challenge and is moving towards innovative solutions. A nascent, sometimes opaque, ecosystem of ethical AI startups has emerged to drive solutions to ethical challenges. – They are organized across 5 broad category areas: 1. Data for AI: any treatment or manipulation done to data sets, including generating synthetic data, done to preserve data privacy. 2. ModelOps, Monitoring & Explainability: specific tools that assist in the governance and lifecycle management of production machine learning. This includes detecting bias and offering monitoring and explainability. 3. AI Audits and GRC: consulting firms or platforms that help establish accountability, governance and/or business risk/compliance. 4. Targeted AI Solutions: companies solving ethical AI issues for a particular niche or vertical, e.g. insurtech, fintech, healthtech, etc. 5. Open-Sourced Solutions: fully open-source solutions meant to provide easy access to ethical technologies and responsible AI. – As the space grows, we anticipate the following key trends: 1. Consulting firms may decline in popularity and MLOps / GRC platforms may rise due to the ability to programmatically enforce compliance given concrete metrics. 2. Incumbents will start incorporating less effective versions of bias-related technology in an effort to keep their platforms viable in this new world of bias-conscious policy. They lack the specialty, expertise, and first-mover advantage of these startups but have a well-established client base to draw from. 3. The modern “tech stack” will quickly evolve into an “ethics stack” in which the flow of data moves through companies in the aforementioned categories. For example, a company might employ Gretel for data privacy and synthetic data, Arthur for MLOps management and bias detection, then Saidot for model versioning and governance. 4. The “boom” for ethical AI is estimated to be somewhere from the mid- to late-2020s and will follow a curve similar to “ethics predecessors” like that of cybersecurity in the late-2000s and privacy in the late-2010s. |